A while back, we looked at using Testcontainers for integration testing your .NET applications. If you haven’t read those articles, you can find them at the links below:

Integration Testing with Dotnet Testcontainers – Part 1

Integration Testing with Dotnet Testcontainers – Part 2

The approach described in those articles uses Testcontainers to spin up a brand-new database for each test file. The challenge is that this can get slow, depending on the heft of your database system and the number of test files you maintain. Even with Docker, there is real overhead in spinning up a new SQL Server and disposing of it for every file.

Today, let’s look at an alternate approach: using a single, shared database to run your entire suite of tests (or a subset of it), even when those tests are spread across multiple files. Historically, the main drawback of this approach was that you had to manage the test data yourself. For one test, you set up data in a particular way, but when you move on to the next test, you have to reset the state so that the previous test’s data doesn’t affect the current one. Managing test data by hand can get tedious and error-prone. Today, we’ll look at a nifty library called Respawn that helps in this regard.

A Working Example

For the purposes of this article, I have created a simple Create/Read/Update/Delete (CRUD) API that allows you to add, get, update and remove employees. There is also a fifth endpoint for fetching all employees. You can check out the finished example on my GitHub, here:

https://github.com/tvaidyan/respawn-demo

Here are some things to note about this application:

- This example also uses Testcontainers to create a SQL Server database via Docker for running these integration tests. You can dig into the details in the `EmployeeApiFactory.cs` class. Also, check out my articles on Testcontainers (links above) if you’re unfamiliar with them.
- In the previous approach, we used Testcontainers together with xUnit’s `IClassFixture` to set up a database per test file. In this example, we use xUnit’s `ICollectionFixture` interface instead. This construct lets us share some context between multiple test files. You can learn more about shared contexts in the xUnit documentation. Here, we use it to create a single database that is shared by all the tests for all endpoints of the Employees API. I’ve wired this up in the `SharedDbCollection.cs` file.

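```csharp
using Xunit;

// Every test class marked [Collection("EmployeeDbCollection")] shares one EmployeeApiFactory.
[CollectionDefinition("EmployeeDbCollection")]
public class SharedDbCollection : ICollectionFixture<EmployeeApiFactory>
{
    // Intentionally empty: this class only carries the attribute and the interface.
}
```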

As you can see, there is not much happening in this file. But the two relevant things here are:

- I’m defining a `CollectionDefinition` name: `EmployeeDbCollection`. In any test file where I want to share this context (i.e., our database), I decorate the test class with a `Collection` attribute that references the same “EmployeeDbCollection” name defined here.
- `ICollectionFixture` is a generic interface, and I’m supplying it with my `EmployeeApiFactory` type. That class contains all the code responsible for creating the SQL Server database in Docker and running the database migrations.

Now, with this setup in place, I can run all my tests and they will all run against a single instance of SQL Server. That’s quite helpful here because it greatly reduces the overall time it takes to run these tests: we no longer have to contend with the overhead of creating and destroying a database for each test file.

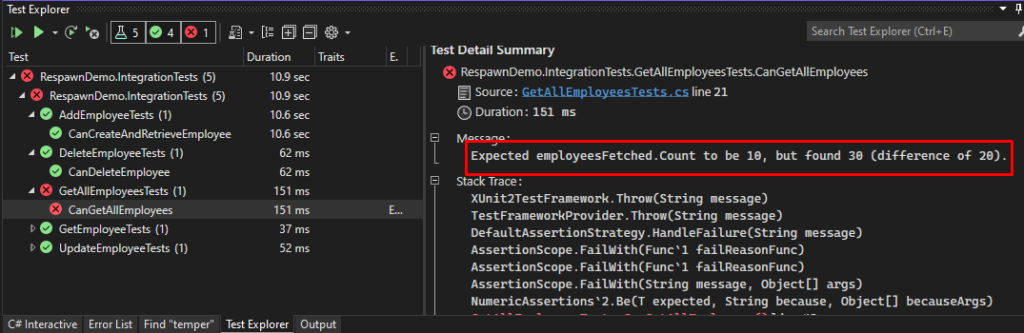

However, this brings back the other problem I mentioned earlier: data pollution from one test to the next. Since we don’t have a separate database for each test file, the data from one test remains in the database when the next test executes, and that leftover data can interfere with the assertions we’re making. For example, in my `CanGetAllEmployees` test method, I create 10 new employees, call the “Get All Employees” endpoint and assert that I get 10 results. However, this test fails because the database also contains employees that were created during the setup of other tests, in other files.

Enter Respawn

This is where the Respawn library comes in. It lets us reset the database before each test, or each group of tests, deleting all the test data and giving every subsequent test a clean slate. You can add it to your project from NuGet: `dotnet add package Respawn`.

This library was created by Jimmy Bogard. You may know Jimmy from his other popular packages, such as MediatR and AutoMapper. This nifty utility intelligently deletes data from your test database to get it back to a clean state, so that you can import test data and run additional tests against it. You can also specify a list of tables to exclude from the deletion process.
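To make "intelligently" a bit more concrete, consider what wiping a database involves. Rows must be deleted from referencing (child) tables before the tables they point to, or foreign keys reject the deletes. Respawn works out that order from your database’s schema metadata. The sketch below only illustrates the idea and is not Respawn’s code. It runs a topological sort (Kahn’s algorithm) over a hand-written map of foreign keys, with an ignore list standing in for excluded tables. The class name `DeleteOrderSketch` and all table names are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Illustration only: a simplified take on the delete-ordering problem, not Respawn's implementation.
public static class DeleteOrderSketch
{
    // foreignKeys maps each table to the tables it references (child -> parents).
    // Returns the non-ignored tables ordered so every child is deleted before its parents.
    public static List<string> GetDeleteOrder(
        IReadOnlyDictionary<string, string[]> foreignKeys,
        ISet<string> tablesToIgnore)
    {
        var tables = foreignKeys.Keys.Where(t => !tablesToIgnore.Contains(t)).ToList();

        // For each table, count how many (non-ignored) tables still reference it.
        var referenceCount = tables.ToDictionary(t => t, _ => 0);
        foreach (var child in tables)
            foreach (var parent in foreignKeys[child])
                if (referenceCount.ContainsKey(parent))
                    referenceCount[parent]++;

        // Tables that nothing references can be emptied right away.
        var ready = new Queue<string>(tables.Where(t => referenceCount[t] == 0));
        var order = new List<string>();
        while (ready.Count > 0)
        {
            var table = ready.Dequeue();
            order.Add(table);

            // Emptying this table releases one reference on each of its parents.
            foreach (var parent in foreignKeys[table])
                if (referenceCount.ContainsKey(parent) && --referenceCount[parent] == 0)
                    ready.Enqueue(parent);
        }

        // Anything left over is part of a foreign-key cycle (including self-references).
        if (order.Count != tables.Count)
            throw new InvalidOperationException("Foreign-key cycle detected; no plain delete order exists.");

        return order;
    }
}
```

For instance, suppose there are hypothetical tables `Departments`, `Employees` (which references `Departments`) and `EmployeeAddresses` (which references `Employees`), and `SchemaVersion` is in the ignore set. The sketch then returns `EmployeeAddresses`, `Employees`, `Departments`. Cycles are simply rejected here, because this sketch deliberately leaves out the extra handling that real tools need for them.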

This approach has a potential advantage over the one we looked at previously, where we started from a new database for each test file. Since we are not creating a new database for every file, it can reduce the overall time needed to run your entire test suite. On the flip side, all these test classes share the same database instance and belong to the same xUnit collection, so xUnit runs them serially. With the one-database-per-test-file approach, by contrast, xUnit could run test files in parallel. You’ll have to evaluate, based on your own situation, which of these two approaches works for you.

Initializing Respawn

Here’s the EmployeeApiFactory class in its entirety:

```csharp
using DotNet.Testcontainers.Builders;
using DotNet.Testcontainers.Configurations;
using DotNet.Testcontainers.Containers;
using DotNet.Testcontainers.Networks;
using Microsoft.AspNetCore.Hosting;
using Microsoft.AspNetCore.Mvc.Testing;
using Microsoft.AspNetCore.TestHost;
using Respawn;
using System.Data.Common;
using System.Data.SqlClient;
using Xunit;

namespace RespawnDemo.IntegrationTests.Shared;

public class EmployeeApiFactory : WebApplicationFactory<IApiMarker>, IAsyncLifetime
{
    private Respawner respawner = default!;
    private DbConnection dbConnection = default!;
    private MsSqlTestcontainer dbContainer = default!;
    private TestcontainersContainer grate = default!;
    private IDockerNetwork testNetwork = default!;

    private readonly string databaseName = "IntegrationTestsDb";
    private readonly string databasePassword = "yourStrong(!)Password";
    private readonly string databaseServerName = "db-testcontainer";

    // The container's connection string targets "master"; point it at the migrated database instead.
    public string DatabaseConnectionString =>
        dbContainer.ConnectionString.Replace("master", databaseName);

    public HttpClient HttpClient { get; private set; } = default!;

    protected override void ConfigureWebHost(IWebHostBuilder builder)
    {
        // Point the API under test at the containerized database.
        builder.ConfigureTestServices(services =>
        {
            services.SetupDatabaseConnection(DatabaseConnectionString);
        });
    }

    public async Task InitializeAsync()
    {
        // Log lines used to detect when each container is ready.
        const string started = "Recovery is complete. This is an informational message only. No user action is required.";
        const string grateFinished = "Skipping 'AfterMigration', afterMigration does not exist.";

        using var stdout = new MemoryStream();
        using var stderr = new MemoryStream();
        using var consumer = Consume.RedirectStdoutAndStderrToStream(stdout, stderr);

        // A shared Docker network lets the grate container reach SQL Server by name.
        testNetwork = new TestcontainersNetworkBuilder().WithName("testNetwork").Build();
        await testNetwork.CreateAsync();

        // Create SQL Server and wait for it to be ready.
        dbContainer = new TestcontainersBuilder<MsSqlTestcontainer>()
            .WithDatabase(new MsSqlTestcontainerConfiguration
            {
                Password = databasePassword,
            })
            .WithImage("mcr.microsoft.com/mssql/server:2019-CU10-ubuntu-20.04")
            .WithOutputConsumer(consumer)
            .WithWaitStrategy(Wait.ForUnixContainer().UntilMessageIsLogged(consumer.Stdout, started))
            .WithNetwork(testNetwork)
            .WithName(databaseServerName)
            .Build();

        await dbContainer.StartAsync();

        // Create a grate container for SQL migrations and run them against SQL Server.
        var sqlMigrationsBaseDirectory = Path.Combine(Environment.CurrentDirectory, "sql-migrations").ConvertToPosix();

        using var gratestdout = new MemoryStream();
        using var gratestderr = new MemoryStream();
        using var grateconsumer = Consume.RedirectStdoutAndStderrToStream(gratestdout, gratestderr);

        grate = new TestcontainersBuilder<TestcontainersContainer>()
            .WithNetwork(testNetwork)
            .WithImage("erikbra/grate")
            .WithBindMount(sqlMigrationsBaseDirectory, "/sql-migrations")
            .WithCommand(
                $"--connectionstring=Server={databaseServerName};Database={databaseName};User ID=sa;Password={databasePassword};TrustServerCertificate=True;Encrypt=false",
                "--files=/sql-migrations")
            .WithOutputConsumer(grateconsumer)
            .WithWaitStrategy(Wait.ForUnixContainer().UntilMessageIsLogged(grateconsumer.Stdout, grateFinished))
            .Build();

        await grate.StartAsync();

        dbConnection = new SqlConnection(DatabaseConnectionString);
        HttpClient = CreateClient(new WebApplicationFactoryClientOptions { AllowAutoRedirect = false });

        await InitializeRespawner();
    }

    // Deletes the data in every table Respawn is tracking.
    public async Task ResetDatabaseAsync() => await respawner.ResetAsync(dbConnection);

    private async Task InitializeRespawner()
    {
        await dbConnection.OpenAsync();

        // Respawn inspects the schema here to work out which tables to clear, and in what order.
        respawner = await Respawner.CreateAsync(dbConnection, new RespawnerOptions
        {
            DbAdapter = DbAdapter.SqlServer,
            SchemasToInclude = new[] { "dbo" }
        });
    }

    async Task IAsyncLifetime.DisposeAsync()
    {
        // Close the connection before tearing down the containers it points at.
        await dbConnection.DisposeAsync();
        await grate.DisposeAsync();
        await dbContainer.DisposeAsync();
    }
}
```

There’s quite a lot happening here:

- It creates a SQL Server database in Docker using the Testcontainers library.
- It uses Grate, another great open-source project, for database migrations.
- It initializes an `HttpClient` and exposes it as a public property. You can use this in all your test files without needing to create a new instance in each file.
- It initializes Respawn using its `Respawner.CreateAsync` method. This method accepts a database connection object, which Respawn uses to connect to your database, along with some initialization options to:
  - Include (or exclude) one or more schemas
  - Include (or exclude) one or more tables
- It exposes a public `ResetDatabaseAsync` method that you can call from your individual test files to run the database reset.
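One small fragility in the factory is that `DatabaseConnectionString` uses `string.Replace("master", databaseName)`. That swaps out *every* occurrence of "master", including one that happens to appear in a password or host name. Below is a sketch of a more targeted alternative that uses the provider-agnostic `DbConnectionStringBuilder` from `System.Data.Common`. The helper name `ConnectionStrings.WithDatabase` is hypothetical. The sample connection strings in the tests only approximate the shape Testcontainers produces, and I haven’t checked its exact format. The test project already references `System.Data.SqlClient`, so its `SqlConnectionStringBuilder` and `InitialCatalog` property would work just as well.

```csharp
using System;
using System.Data.Common;

// Hypothetical helper: retargets a connection string at another database by key, not by text search.
public static class ConnectionStrings
{
    public static string WithDatabase(string connectionString, string databaseName)
    {
        // Keyword lookups in DbConnectionStringBuilder are case-insensitive.
        var builder = new DbConnectionStringBuilder { ConnectionString = connectionString };

        // "Initial Catalog" is a common synonym for "Database"; drop it so only one key remains.
        builder.Remove("Initial Catalog");
        builder["Database"] = databaseName;

        // Only the database value changes; the password, server, etc. are left untouched.
        return builder.ConnectionString;
    }
}
```

Inside the factory, the property could then return `ConnectionStrings.WithDatabase(dbContainer.ConnectionString, databaseName)`.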

Bringing It All Together

With all that setup in place, go ahead and write your integration tests. Let’s review one of my test files:

```csharp
using FluentAssertions;
using RespawnDemo.Api.Employee;
using RespawnDemo.IntegrationTests.Shared;
using System.Net;
using System.Text.Json;
using Xunit;

namespace RespawnDemo.IntegrationTests;

[Collection("EmployeeDbCollection")]
public class GetAllEmployeesTests : IAsyncLifetime
{
    private readonly string databaseConnectionString;
    private readonly Func<Task> resetDatabase;
    private readonly HttpClient client;

    public GetAllEmployeesTests(EmployeeApiFactory apiFactory)
    {
        // xUnit injects the single EmployeeApiFactory shared by the whole collection.
        databaseConnectionString = apiFactory.DatabaseConnectionString;
        client = apiFactory.HttpClient;
        resetDatabase = apiFactory.ResetDatabaseAsync;
    }

    [Fact]
    public async Task CanGetAllEmployees()
    {
        // Arrange: seed exactly 10 employees.
        var employeeFactory = new EmployeeFactory(databaseConnectionString);
        employeeFactory.CreateRandomSamplingOfEmployees(10);

        // Act: the test client's base address already points at the in-memory test server.
        var response = await client.GetAsync("/employees");

        // Assert
        response.StatusCode.Should().Be(HttpStatusCode.OK);

        var result = await response.Content.ReadAsStringAsync();
        var employeesFetched = JsonSerializer.Deserialize<List<Employee>>(result, new JsonSerializerOptions
        {
            PropertyNameCaseInsensitive = true
        })!;

        employeesFetched.Should().NotBeNull();
        employeesFetched.Count.Should().Be(10);
    }

    // xUnit calls DisposeAsync after each test method in this class.
    public Task DisposeAsync() => resetDatabase();

    public Task InitializeAsync() => Task.CompletedTask;
}
```

A few things to note:

- This class is annotated with the `[Collection("EmployeeDbCollection")]` attribute. That’s what glues this class to the shared `EmployeeApiFactory`.
- `ResetDatabaseAsync` is called in the `DisposeAsync` method. xUnit creates a new instance of the test class for every test method and calls `DisposeAsync` after each one, so this resets the database after every test in this file, not just once at the end.
- Take a look at the other test files in this repo; they follow this same basic pattern.

Closing Remarks

In this post, I’ve presented an alternate approach to integration testing: share a single database across a group of tests spanning multiple files, instead of using a brand-new database for each test file. Try it in your own integration tests to see whether it reduces the overall time it takes to run your test suite. Remember to check out the companion repo on my GitHub, here: